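1. Ingress Controller high availability

The Ingress Controller is the entry layer for cluster traffic, so making it highly available matters. One approach is to build nginx-ingress-controller high availability on keepalived, as follows: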

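The ingress controller is deployed as a Deployment with a nodeSelector and pod anti-affinity, so that its replicas land on two designated Kubernetes worker nodes, one per node. The nginx-ingress-controller pods share the host's IP (host networking), and keepalived with LVS then provides high availability across the two nodes.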
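The scheduling constraints described above can be sketched as the following trimmed Deployment. This is an illustration rather than the manifest used in this article: the node label `ingress: "true"`, the pod label, and the image tag are assumptions, and a working controller also needs its arguments, ports, ServiceAccount, and RBAC objects from the upstream baremetal manifest.

```yaml
# Sketch only: shows the scheduling-related fields, not a complete controller.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: ingress-nginx-controller
  namespace: ingress-nginx
spec:
  replicas: 2                          # one replica for each designated node
  selector:
    matchLabels:
      app.kubernetes.io/name: ingress-nginx
  template:
    metadata:
      labels:
        app.kubernetes.io/name: ingress-nginx
    spec:
      hostNetwork: true                # pod shares the node's IP and ports
      dnsPolicy: ClusterFirstWithHostNet
      nodeSelector:
        ingress: "true"                # hypothetical label put on the two nodes
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchLabels:
                  app.kubernetes.io/name: ingress-nginx
              topologyKey: kubernetes.io/hostname   # never two replicas on one node
      containers:
        - name: controller
          image: registry.k8s.io/ingress-nginx/controller:v1.1.0   # assumed tag
```

With `hostNetwork: true` the controller binds ports 80 and 443 directly on each node, which is what lets a virtual IP managed by keepalived move between the two nodes.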

https://github.com/kubernetes/ingress-nginx

https://github.com/kubernetes/ingress-nginx/tree/main/deploy/static/provider/baremetal

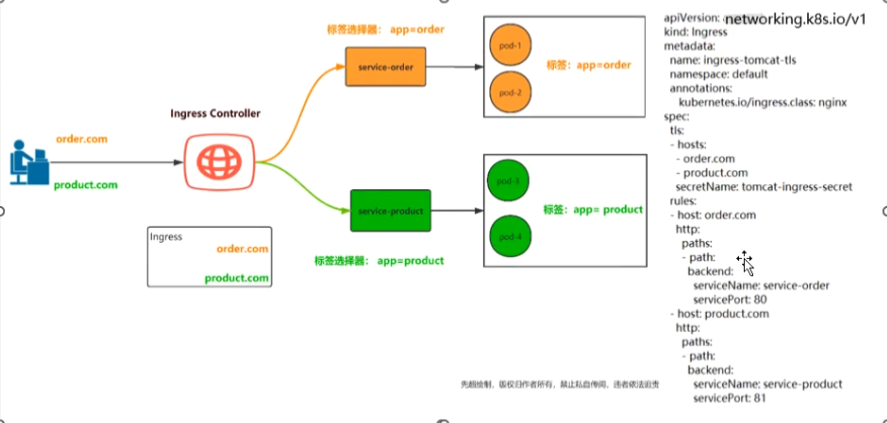

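The Ingress Controller continuously talks to the Kubernetes API to learn in real time about changes to backend Services and Pods, such as additions and deletions. It combines those changes with the rules defined in Ingress objects to generate configuration, dynamically updates the underlying load balancer (Nginx or Traefik), and reloads it so the configuration takes effect. The result is automatic service discovery.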

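An Ingress defines the rules: it specifies which Service in the cluster receives requests for a given domain name. It is written as a YAML file, and one or more Ingress rules can be defined for one or more Services.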

Ingress Controller proxy workflow

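1. Deploy the Ingress controller (nginx).

2. Create the application Pods through a workload controller.

3. Create a Service to group the Pods.

4. Create an HTTP Ingress and test access to the application over HTTP.

5. Create an HTTPS Ingress and test access to the application over HTTPS.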

1. nginx-ingress-controller high availability with nginx and keepalived

1. Label the worker nodes (run from `[root@master1 ~]`)

2. Load the required images on the worker nodes: `docker load -i kube-webhook-certgen-v1.1.0.tar.gz`

3. Create the Pods (run from `[root@master1 ~]`)

4. Install nginx and keepalived on node1 and node2 (shown from `[root@node1 ~]`)

5. Edit the nginx configuration file (identical on node1 and node2)

The configuration includes the following directives and log formats:

- `user nginx;`
- `log /nginx/error.log;`
- Log format `'$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';` used with `log /nginx/k8s-access.log main;`
- Log format `'$remote_addr - $remote_user [$time_local] "$request" ' '$status $body_bytes_sent "$http_referer" ' '"$http_user_agent" "$http_x_forwarded_for"';` used with `log /nginx/access.log main;`

6. Configure keepalived on the master and backup nodes

Master: the configuration opens with `global_defs {` and references the health-check script `"/etc/keepalived/check_nginx.sh"`.

Backup: the configuration likewise opens with `global_defs {` and references `"/etc/keepalived/check_nginx.sh"`.

7. The check_nginx script

The script restarts nginx when no nginx process is listening, and stops keepalived if nginx is still down two seconds later, so that the virtual IP moves to the other node.

    cat > /etc/keepalived/check_nginx.sh <<END
    #!/bin/bash
    counter=\`netstat -tunpl | grep nginx | wc -l\`
    if [ \$counter -eq 0 ]; then
        service nginx start
        sleep 2
        counter=\`netstat -tunpl | grep nginx | wc -l\`
        if [ \$counter -eq 0 ]; then
            service keepalived stop
        fi
    fi
    END

8. Create ingress-deploy.yaml (run from `[root@master1 ~]`)

9. nginx-ingress-controller high availability through keepalived and nginx (value shown in the listing for this step: `true`)

10. Test the Ingress as an HTTP proxy for a site inside the cluster

1. Write the tomcat-service file (run from `[root@master1 ingress]`)

2. Write the tomcat-pod file (run from `[root@master1 ingress]`)
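As a rough illustration of what a Service and its backing Pods for this test might look like, here is a minimal sketch. The object names, the `app: tomcat` label, the replica count, and the image tag are all assumptions, not the values used in this article.

```yaml
# Sketch: a ClusterIP Service in front of a small Tomcat Deployment.
apiVersion: v1
kind: Service
metadata:
  name: tomcat                 # hypothetical name
  namespace: default
spec:
  selector:
    app: tomcat                # must match the Pod labels below
  ports:
    - name: http
      port: 8080
      targetPort: 8080
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tomcat-deploy          # hypothetical name
  namespace: default
spec:
  replicas: 2
  selector:
    matchLabels:
      app: tomcat
  template:
    metadata:
      labels:
        app: tomcat
    spec:
      containers:
        - name: tomcat
          image: tomcat:9.0    # assumed tag
          ports:
            - containerPort: 8080
```

Recent official Tomcat images may ship with an empty `webapps` directory, in which case Tomcat answers `/` with a 404. A 404 generated by Tomcat still shows that the request reached the Pod.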

3. Write the Ingress file (run from `[root@master1 ingress]`), with the ingress class set to `"nginx"`
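A minimal sketch of such an Ingress follows. The host `tomcat.zy.com` and the class `nginx` come from this article; the Ingress name and the backend Service `tomcat` on port 8080 are assumptions, and the sketch presumes an IngressClass named `nginx` exists in the cluster.

```yaml
# Sketch: route HTTP requests for tomcat.zy.com to a Service named "tomcat".
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-tomcat         # hypothetical name
  namespace: default
spec:
  ingressClassName: nginx      # handled by the default nginx controller
  rules:
    - host: tomcat.zy.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: tomcat   # hypothetical Service name
                port:
                  number: 8080
```

For the test, the client machine must resolve `tomcat.zy.com` to the virtual IP held by keepalived, for example through a hosts-file entry.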

4. Test that layer-7 proxying works

The Deployment, nodeSelector, and pod anti-affinity together schedule the ingress-controller onto node1 and node2.

From a Windows client prompt: `C:\Users\79254>curl tomcat.zy.com -I`

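2. Multiple ingress controllers in one Kubernetes cluster

An Ingress can be thought of as a Service for Services: a separate Ingress object specifies the request-forwarding rules and routes requests to one or more Services. This decouples services from routing rules, so exposure can be planned per business domain instead of separately for each Service.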

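In the same Kubernetes cluster, deploy two ingress-nginx controllers: one as a Deployment for project A's API gateway, and another as a DaemonSet for domain-based access to the other projects. The two differ in update frequency and usage, so they are kept separate for now.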

To support multi-tenant scenarios, several ingress controllers are deployed in the cluster for use by different users and environments.

Main parameter settings (under `containers:` in the controller's pod spec):

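Note: `--ingress-class` sets the Ingress Class identifier that this Ingress Controller watches. The identifiers watched by different controllers in the same cluster must be unique, and must not be `nginx`, which is the identifier watched by the cluster's default Ingress Controller.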
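As a sketch of how these settings might appear on a second controller, the fragment below shows only the relevant container arguments. The class name `ngx-ds` is the one used in this article; the `--controller-class` and `--election-id` values are assumptions chosen so the second controller does not collide with the default one.

```yaml
# Fragment of the second controller's pod spec (sketch, not a full manifest).
containers:
  - name: controller
    args:
      - /nginx-ingress-controller
      - --ingress-class=ngx-ds              # class this controller watches
      - --controller-class=k8s.io/ngx-ds    # assumed; must differ from k8s.io/ingress-nginx
      - --election-id=ngx-ds-leader         # assumed; separate leader-election lock
```

Giving each controller its own election ID keeps the two installations from competing for the same leader-election lock.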

Create the Ingress rule:

The manifest uses `apiVersion: networking.k8s.io/v1` and references the class `"ngx-ds"`.
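A minimal sketch of an Ingress bound to the second controller might look like this. Only the class name `ngx-ds` is taken from this article; the controller string, host, Ingress name, and backend Service are hypothetical.

```yaml
# Sketch: an IngressClass for the second controller and an Ingress that uses it.
apiVersion: networking.k8s.io/v1
kind: IngressClass
metadata:
  name: ngx-ds
spec:
  controller: k8s.io/ngx-ds        # assumed; must equal the controller's --controller-class
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: demo-ngx-ds                # hypothetical name
spec:
  ingressClassName: ngx-ds         # only the ngx-ds controller picks this up
  rules:
    - host: demo.example.com       # hypothetical host
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: demo-svc     # hypothetical Service
                port:
                  number: 80
```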

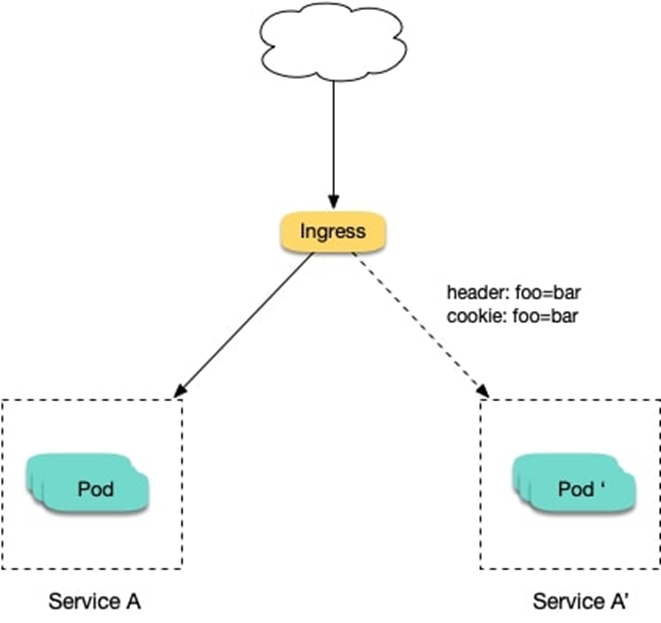

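3. Canary releases with ingress-nginx

1. Release a new version to a subset of users

Suppose Service A runs in production and serves layer-7 traffic. A new version of Service A has been developed, but rather than replacing the original outright, you want to expose it to a small group of users first, roll it out fully once it has run long enough to prove stable, and finally retire the old version smoothly. Nginx Ingress supports this by splitting traffic on a Header or Cookie. The application uses a Header or Cookie to mark different types of users, and the Ingress is configured so that requests carrying the specified Header or Cookie go to the new version while all others still go to the old version: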

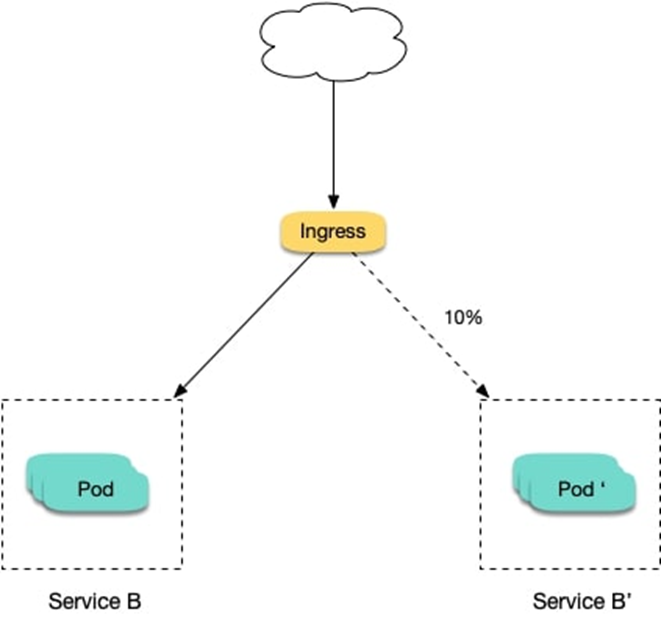

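2. Shift a percentage of traffic to the new version

Suppose Service B runs in production and serves layer-7 traffic. Some problems have been fixed and a new version of Service B needs a canary rollout, but rather than replacing the original outright, you first shift 10% of traffic to the new version, increase that share gradually after observing that it is stable until the old version is fully replaced, and finally retire the old version smoothly: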

Ingress-Nginx is a Kubernetes ingress tool that supports Ingress annotations for canary releases and testing in different scenarios. Its annotations support the following canary rules:

Assume two versions of the service are now deployed: the old version and the canary version.

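- `nginx.ingress.kubernetes.io/canary-by-header`: traffic splitting based on a request header, suited to canary releases and A/B testing. When the request header is set to `always`, the request is always sent to the canary version; when it is set to `never`, the request is never sent to the canary.

- `nginx.ingress.kubernetes.io/canary-by-header-value`: the request header value to match, which tells the Ingress to route the request to the Service specified in the Canary Ingress. When the request header is set to this value, the request is routed to the canary.

- `nginx.ingress.kubernetes.io/canary-weight`: traffic splitting based on service weight, suited to blue-green deployments. The weight ranges from 0 to 100 and is the percentage of requests routed to the Service specified in the Canary Ingress. A weight of 0 means this canary rule sends no requests to the canary Service, a weight of 60 means 60% of traffic goes to the canary, and a weight of 100 means all requests are sent to the canary.

- `nginx.ingress.kubernetes.io/canary-by-cookie`: traffic splitting based on a cookie, suited to canary releases and A/B testing. It names the cookie that tells the Ingress to route the request to the Service specified in the Canary Ingress. When the cookie value is `always`, the request is routed to the canary; when it is `never`, the request is never sent to the canary.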

3. Simulate production and test versions of a web service

1. Deploy the v1 version (run from `[root@master1 deploy_web]`)

The manifest runs the image `"openresty/openresty:centos"`, supplies an nginx configuration at a path ending in `/openresty/nginx/conf/nginx.conf` whose Lua handler calls `ngx.say("nginx-v1")`, and exposes the Pods through a Service of `type: ClusterIP`.
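A compact sketch of such a v1 deployment is shown below. The image, the `ngx.say("nginx-v1")` response, and the ClusterIP Service type come from this article; the object names, labels, and the exact nginx configuration are assumptions. The mount path is the default configuration location in the OpenResty image.

```yaml
# Sketch: OpenResty answering every request with "nginx-v1".
apiVersion: v1
kind: ConfigMap
metadata:
  name: nginx-v1-conf              # hypothetical name
data:
  nginx.conf: |
    worker_processes 1;
    events {
      worker_connections 1024;
    }
    http {
      server {
        listen 80;
        location / {
          default_type text/plain;
          content_by_lua_block {
            ngx.say("nginx-v1")
          }
        }
      }
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-v1                   # hypothetical name
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
      version: v1
  template:
    metadata:
      labels:
        app: nginx
        version: v1
    spec:
      containers:
        - name: nginx
          image: openresty/openresty:centos
          ports:
            - containerPort: 80
          volumeMounts:
            - name: config
              mountPath: /usr/local/openresty/nginx/conf/nginx.conf
              subPath: nginx.conf  # mount the single file, not the whole directory
      volumes:
        - name: config
          configMap:
            name: nginx-v1-conf
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-v1                   # hypothetical name
spec:
  type: ClusterIP
  selector:
    app: nginx
    version: v1
  ports:
    - port: 80
      targetPort: 80
```

A v2 variant under the same assumptions would differ only in the names, the `version` label, and the string passed to `ngx.say`.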

2. Deploy the v2 version (run from `[root@master1 deploy_web]`)

The manifest mirrors v1: image `"openresty/openresty:centos"`, an nginx configuration at a path ending in `/openresty/nginx/conf/nginx.conf` whose Lua handler calls `ngx.say("nginx-v2")`, and a Service of `type: ClusterIP`.

3. Create an Ingress that exposes the service externally and points to the v1 Service (run from `[root@master1 deploy_web]`), with the ingress class set to `"nginx"`
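A minimal sketch of this primary Ingress follows. The class `nginx` comes from this article; the host `canary.example.com`, the Ingress name, and the backend Service `nginx-v1` are hypothetical.

```yaml
# Sketch: the primary (non-canary) Ingress, sending all traffic to v1.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx                      # hypothetical name
spec:
  ingressClassName: nginx
  rules:
    - host: canary.example.com     # hypothetical host
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx-v1     # hypothetical v1 Service
                port:
                  number: 80
```

A Canary Ingress only takes effect when it shares the host and path of a primary Ingress like this one.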

4. Verify access through the Ingress (run from `[root@master1 deploy_web]`)

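5. Header-based traffic splitting

Create a Canary Ingress that specifies the v2 backend Service and adds annotations so that only requests carrying a header named Region with the value cd or sz are forwarded to this Canary Ingress. This simulates releasing the new version to users in the Chengdu and Shenzhen regions: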

The manifest (applied from `[root@master1 deploy_web]`) sets the canary annotations to `"true"`, `"Region"`, and `"cd|sz"`.
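A sketch of a header-based Canary Ingress using these three values is given below. The annotation values come from this article; pairing `"cd|sz"` with `canary-by-header-pattern` is an assumption based on it being a regular expression, and the host, Ingress name, and Service `nginx-v2` are hypothetical.

```yaml
# Sketch: send requests with header "Region: cd" or "Region: sz" to v2.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx-canary               # hypothetical name
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-header: "Region"
    nginx.ingress.kubernetes.io/canary-by-header-pattern: "cd|sz"   # regex match on the value
spec:
  ingressClassName: nginx
  rules:
    - host: canary.example.com     # must match the primary Ingress host
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx-v2     # hypothetical v2 Service
                port:
                  number: 80
```

Under these assumptions, a request sent with `-H "Region: cd"` should be answered by v2, while a request with a different Region value or without the header should still reach v1.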

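6. Cookie-based traffic splitting

This works like the header approach, except that a cookie does not allow a custom value. The example simulates a canary release to users in the Chengdu region: only requests carrying a cookie named user_from_cd are forwarded to this Canary Ingress.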

The manifest (applied from `[root@master1 deploy_web]`) sets the canary annotations to `"true"` and `"user_from_cd"`.
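A sketch of the cookie-based variant follows. The annotation values come from this article; the host, Ingress name, and Service `nginx-v2` are hypothetical. ingress-nginx applies at most one Canary Ingress per primary rule, so this sketch assumes it replaces any earlier Canary Ingress instead of being added alongside it.

```yaml
# Sketch: send requests carrying the cookie user_from_cd=always to v2.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx-canary               # hypothetical name
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-cookie: "user_from_cd"
spec:
  ingressClassName: nginx
  rules:
    - host: canary.example.com     # must match the primary Ingress host
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx-v2     # hypothetical v2 Service
                port:
                  number: 80
```

Because the cookie value cannot be customized, the request needs `user_from_cd=always` to reach the canary; a value of `never` keeps it on v1.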

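7. Weight-based traffic splitting

A weight-based Canary Ingress is simpler: define the percentage of traffic to shift directly. This example sends 10% of traffic to the v2 version.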

The manifest (applied from `[root@master1 deploy_web]`) sets the canary annotations to `"true"` and `"10"`.
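A sketch of the weight-based variant is shown below. The annotation values come from this article; the host, Ingress name, and Service `nginx-v2` are hypothetical, and the sketch assumes it replaces any earlier Canary Ingress for the same host and path.

```yaml
# Sketch: route roughly 10% of requests to v2, the rest to v1.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx-canary               # hypothetical name
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-weight: "10"   # percentage, 0 to 100
spec:
  ingressClassName: nginx
  rules:
    - host: canary.example.com     # must match the primary Ingress host
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx-v2     # hypothetical v2 Service
                port:
                  number: 80
```

The split is probabilistic per request, so a short run of requests will only approximate the 10% share. Raising the weight step by step and finally pointing the primary Ingress at v2 completes the rollout.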